The question in the headline came to mind after reading this tweet from the Chief Medical Officer (whose Twitter use, it must be said, appears to have increased substantially since the Government banned him from going on the radio without permission. Make of that what you will):

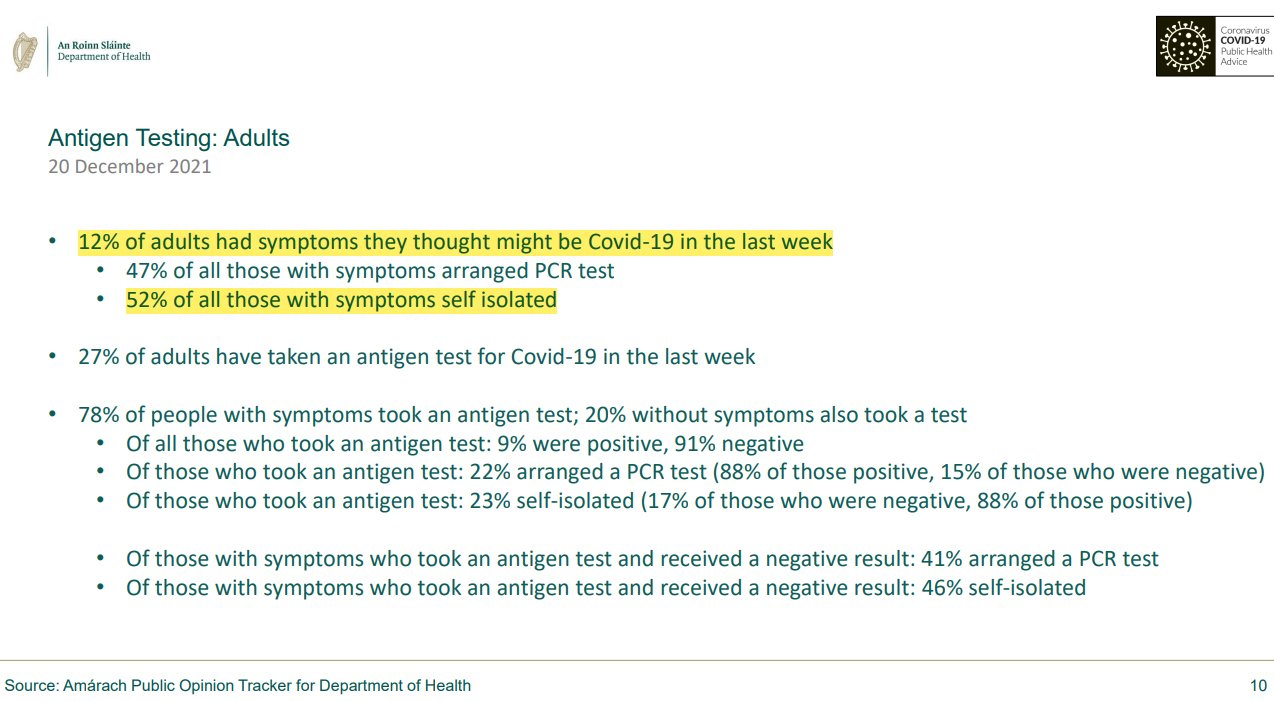

It's also a concern that our latest Amarach tracking data shows that only half of people with symptoms are isolating.

— Dr Tony Holohan (@DrTonyHolohan) December 20, 2021

This is a startling claim. A full half of people with Covid symptoms not isolating? There are times when data does not tally with what a reasonable observer might assume to be true, and reveals that some assumptions were wrong. This is the value of polling. But there are also times when the data does not tally with what we might assume to be true, and reveals the limitations of polling. There are grounds to suspect that this is the latter case. Why?

Well, NPHET uses Amárach Research to conduct its polls. Amárach is an excellent polling company – this writer has employed them, and worked with them, in the past. There is no doubt that the data they are providing NPHET with is accurate.

But data can be both accurate and misleading, in a number of ways.

For example, let’s look at the breakdown here, in terms of sample sizes. We know that the full sample size of NPHET’s most recent tracking poll was 1,300 people. That is more than big enough to give what we call a “representative sample”. The problems arise when you get into sub-samples:

As we see here, twelve per cent of adults had covid symptoms in the last week. That means, out of 1,300 people polled, roughly 156 reported symptoms. That also means that the poll of people who reported symptoms in the last week, and were asked whether they self-isolated, is a poll of… about 156 people. The margin of error on a poll of 1,300 people is about three per cent. When you get down to 156 people, the margin of error rises to around eight per cent at the same confidence level. Such sub-samples are not entirely useless, but they should be treated with extreme caution.
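For readers who want to check that arithmetic, here is a minimal sketch using the standard textbook formula for the margin of error on a proportion. It assumes a simple random sample, a 95% confidence level (z = 1.96) and the worst-case split of 50/50; none of those assumptions come from NPHET or Amárach, and the function name `margin_of_error` is invented here for illustration. Real polls are weighted, so the true margins may be somewhat wider than these figures.

```python
import math


def margin_of_error(n, p=0.5, z=1.96):
    """Approximate margin of error for a proportion p in a simple random sample of n.

    Assumptions (not from the poll itself): simple random sampling,
    z = 1.96 for 95% confidence, and p = 0.5 as the worst case.
    """
    return z * math.sqrt(p * (1 - p) / n)


full_sample = 1300      # full tracking poll, as stated in the article
symptom_share = 0.12    # share of adults reporting symptoms in the last week

# Number of respondents who could be asked about isolating.
sub_sample = round(full_sample * symptom_share)

print(f"Sub-sample size: {sub_sample}")
print(f"Margin of error, full sample: {margin_of_error(full_sample):.1%}")
print(f"Margin of error, sub-sample:  {margin_of_error(sub_sample):.1%}")
```

On those assumptions the full sample comes out at a little under three per cent and the sub-sample at a little under eight per cent, which means a headline figure of "half" could plausibly sit anywhere from the low forties to the high fifties.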

But that is not the only problem.

There are also problems with how questions are asked, in what sequence they are asked, and how people might interpret them.

For example, a person might be asked in question one: Did you have covid symptoms in the past week? And then, in question two: Did you self-isolate in the past week? If a person simply answers yes to question one, and no to question two, then you get “person with covid symptoms did not self-isolate”.

The problem is that life is rarely that simple. For example, a person may well have covid symptoms, take a multitude of antigen and PCR tests, and discover that they do not, in fact, have covid. Or they may have had those symptoms the preceding week, and been recovering, and know that they did not have covid.

In both circumstances, a person might reasonably feel, since they do not have covid, that full-blown self-isolation is not necessary, and that they are still free to go to the shops and get milk.

The problem, in short, is that the headline data only gives you the answer to two questions, and not the answer to all the other relevant questions that a person might want to ask, to investigate a startling claim like this.

There is also significant real-world data that backs up the more benign interpretation of the polling result: twelve per cent of Irish people say they have experienced covid symptoms. It is fair to assume that people with symptoms are the most likely to get tested. In the last week, Ireland has recorded about 30,000 to 40,000 positive covid test results. In other words, about 1% of the population has actually tested positive.

Despite this, NPHET’s own polling shows that nearly 6% of the population were self-isolating. This actually suggests that people are being more cautious than their own symptoms warrant, overall.
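The comparison can be laid out as a rough back-of-the-envelope calculation. This is only a sketch: the population figure of five million is an assumption made here for illustration and does not come from the poll, and the poll covers adults rather than the whole population, so the results are approximate at best.

```python
# Assumption for illustration only: a round figure for Ireland's population.
population = 5_000_000

# Figures quoted in the article.
weekly_positives_low = 30_000
weekly_positives_high = 40_000
isolating_share = 0.06   # "nearly 6%" self-isolating, per the tracking poll

# Share of the population testing positive in the week.
positive_share_low = weekly_positives_low / population
positive_share_high = weekly_positives_high / population

# How many people the isolating share would represent, and how that
# compares with the upper estimate of confirmed cases.
isolating_people = isolating_share * population
ratio_to_cases = isolating_people / weekly_positives_high

print(f"Tested positive: {positive_share_low:.1%} to {positive_share_high:.1%}")
print(f"Self-isolating:  about {isolating_people:,.0f} people")
print(f"Isolating per confirmed case (upper case estimate): {ratio_to_cases:.1f}")
```

On these rough numbers, somewhat under one per cent of the population tested positive, while several times that number reported isolating.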

If you look at all of this in the round, it’s relatively clear that the polling result in question is not much cause for concern.

In this case, however, the Chief Medical Officer and NPHET do not seem to have looked past the rather startling headline figure to consider the potential explanations, relating to the sample and the survey structure, that might have produced that result.

Perhaps that is because they do not understand polling – they are medics, after all, not statisticians. Perhaps it is because they did not question it, or probe the reasons for it beyond looking at the headline. Perhaps they simply trusted the results from their pollster – who is, after all, not to blame for asking a question and providing the results.

In any case, Dr. Holohan was quick to highlight this result, and put a negative spin on it. The problem, as ever, is that he seems to have been quite unwilling, or unable, to consider that the result was both slightly misleading, and not nearly so bad as the figures might initially look.