At the risk of outing myself as an incurable Luddite, I have yet to get aboard the Artificial Intelligence (AI)-hype train. I haven’t consulted ChatGPT even once, to my recollection, and the extent of my broader engagement with similar AI systems is pretty much accidentally clicking on them when I open Skype, Adobe or some other programme that’s worked an AI-assistant into its interface.

Try as I might, I just can’t shake the deeply rooted suspicion that, in exchange for the convenience AI undeniably affords us, we offer up something incalculably more valuable, like our ability to persist in research, our attention span or something else along those lines. It’s not a crazy fear – having the internet at our fingertips courtesy of the smartphone has done a hell of a job on our capacity for considered reflection. Not two seconds have gone by after a question pops into our heads before our phones are in our hands and we’re away and asking something else for the answers.

All of that said, news like that recently out of Nigeria’s Edo State does a lot to temper my characteristic opposition to these technological flourishes.

A few months back, a team of specialists in a variety of relevant fields wrote a blogpost for the World Bank about an experiment undertaken in Nigeria’s southern Edo State to try and gauge what sort of effect incorporating free generative AI tools (such as ChatGPT or similar) into education could have on learning outcomes.

This was done because of the long-held understanding that one-on-one tutoring is extraordinarily beneficial for students. That ideal isn’t easily achieved – as we know all too well in Ireland with our high student-teacher ratios – but with the advent of AI tools (specifically, Large Language Models, or LLMs) capable of interacting with students individually and reacting to their learning level and needs, questions of costs and resources are less of an obstacle than ever before.

On the initiative itself, the blogpost reads:

“Over June and July 2024, 800 first-year senior secondary students attended after-school English classes in computer labs twice a week. Each session began with the teacher introducing the week’s topic, followed by students interacting with Microsoft Copilot, a generative AI tool powered by ChatGPT, to master the selected topics comprising both grammar and writing tasks. Acting as “orchestra conductors” of the pilot, the teachers guided students in using the LLM, starting each session with a suggested prompt. As the students interacted with the AI, the teachers mentored them, offering guidance and additional prompts. They also led brief reflection exercises at the end of each session.”

A number of fairly standard lessons were drawn following the implementation of the initiative, such as the importance of robust infrastructure, like internet and electricity supply, that can survive harsh weather conditions, and the necessity of reducing the risks that come hand-in-hand with AI: “overreliance, hallucination (generating false responses and presenting them as facts), and misuse”.

However, in recent days the preliminary findings of the exercise have been published, and if representative of the wider potential these tools possess, they indicate that AI could revolutionise education if properly implemented (I write that aware that that’s a big “if”). As noted by the authors, there are serious risks that need to be addressed to ensure that AI-supplemented education doesn’t become a crutch, or a pathway to faulty thinking.

Regardless, in the recent update, the monitoring specialists revealed that the programme “boosted learning across the board”.

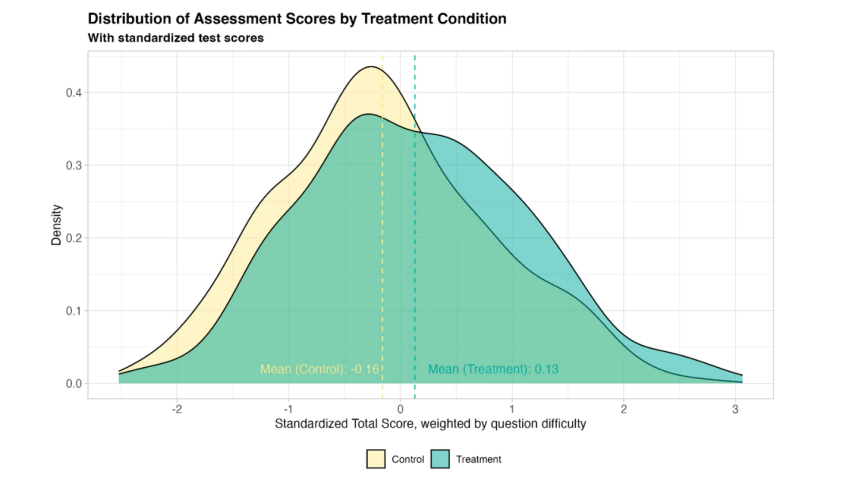

“The results of the randomized evaluation, soon to be published, reveal overwhelmingly positive effects on learning outcomes. After the six-week intervention between June and July 2024, students took a pen-and-paper test to assess their performance in three key areas: English language—the primary focus of the pilot—AI knowledge, and digital skills.

“Students who were randomly assigned to participate in the program significantly outperformed their peers who were not in all areas, including English, which was the main goal of the program. These findings provide strong evidence that generative AI, when implemented thoughtfully with teacher support, can function effectively as a virtual tutor.”

The benefits reportedly extended beyond those anticipated by the scope of the programme, as those students who took part performed better on their regular end-of-year exams than those who didn’t. Very interestingly, those exams weren’t limited to the topics focused on as part of the AI-boosted learning, indicating to the observers that “students who learned to engage effectively with AI may have leveraged these skills to explore and master other topics independently”.

It was also found that the deeper the engagement, the greater the gains. Those students with the highest attendance saw greater gains than those who attended less frequently, and those gains didn’t “taper off” as the programme progressed, suggesting that there’s real scalable potential there. This was coupled with a third finding that the “learning gains” weren’t just marginal, but significant – equivalent, as the authors put it, “to nearly two years of typical learning in just six weeks”.

When the results of this intervention were compared with those of other educational initiatives, it was found to be more effective than 80 percent of them, “including some of the most cost-effective strategies”.

That’s lots of food for thought on the heels of what is likely one of the first such studies on the role of generative AI as tutor in the school setting. Before these tools potentially change the face of education, though, I am glad to see at least some of the researchers involved are aware that there are more questions to be asked, such as what the long-term effects of these interventions are and whether there are any negative, undesired effects that haven’t yet manifested themselves.

Suffice to say, my slow-moving nature has not changed and my stance remains one of scepticism, but I find it difficult to argue with the reality that generative AI may have its genuinely humanitarian uses, such as that discussed above. Taking my lead from my faith as I try to do, I imagine it’s another case of the weeds and the wheat: good and bad uses and effects of this novel technology coming into being alongside one another.